Many light sources we come across today have a CRI rating. Most of us realise that the higher the number, the better the quality of light, but is it really that simple? What exactly is Colour Rendering Index, how is it measured and can we trust it as cinematographers? Let’s find out.

What is C.R.I.?

CRI was created in 1965 by the CIE – Commission Internationale de l’Eclairage – the same body responsible for the colour-space diagram we met in my post about How Colour Works. The CIE wanted to define a standard method of measuring and rating the colour-rendering properties of light sources, particularly those which don’t emit a full spectrum of light, like fluorescent tubes which were becoming popular in the sixties. The aim was to meet the needs of architects deciding what kind of lighting to install in factories, supermarkets and the like, with little or no thought given to cinematography.

As we saw in How Colour Works, colour is caused by the absorption of certain wavelengths of light by a surface, and the reflection of others. For this to work properly, the light shining on the surface in the first place needs to consist of all the visible wavelengths. The graphs below show that daylight indeed consists of a full spectrum, as does incandescent lighting (e.g. tungsten), although its skew to the red end means that white-balancing is necessary to restore the correct proportions of colours to a photographed image. (See my article on Understanding Colour Temperature.)

Fluorescent and LED sources, however, have huge peaks and troughs in their spectral output, with some wavelengths missing completely. If the wavelengths aren’t there to begin with, they can’t reflect off the subject, so the colour of the subject will look wrong.

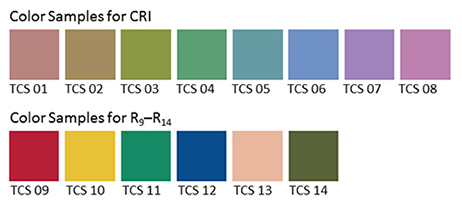

Analysing the spectrum of a light source to produce graphs like this required expensive equipment, so the CIE devised a simpler method of determining CRI, based on how light from the source reflected off a set of eight colour patches. These patches were murky pastel shades taken from the Munsell colour system (see my Colour Schemes post for more on colour wheels). In 2004, six more-saturated patches were added.

The maths which is used to arrive at a CRI value goes right over my head, but the testing process boils down to this:

- Illuminate a patch with daylight (if the source being tested has a correlated colour temperature of 5,000K or above) or incandescent light (if below 5,000K).

- Compare the colour of the patch to a CIE colour-space diagram and note the coordinates of the corresponding colour on the diagram.

- Now illuminate the patch with the source being tested.

- Compare the new colour of the patch to the CIE diagram and note the coordinates of the corresponding colour.

- Calculate the distance between the two sets of coordinates, i.e. the difference in colour under the two light sources.

- Repeat with the remaining patches and calculate the average difference.
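
For the curious, the final averaging step can be sketched in a few lines of Python. This is a simplified illustration, not the full CIE procedure: as I understand the published method, each patch is scored as 100 minus 4.6 times its colour difference, and the headline CRI is the mean of the scores for the first eight patches. The sketch skips the colour-space conversion and chromatic-adaptation steps entirely, and the colour-difference values in it are made up for the example rather than measured from any real lamp.

```python
from statistics import mean


def patch_score(delta_e):
    """Score one patch: 100 means no colour shift; bigger shifts score lower."""
    return 100 - 4.6 * delta_e


def general_cri(delta_es):
    """Average the per-patch scores to get a single headline figure."""
    return mean(patch_score(d) for d in delta_es)


# Hypothetical colour differences for the eight standard patches
# (invented numbers, purely for illustration).
hypothetical_delta_es = [2.0, 1.5, 3.0, 2.5, 2.0, 1.0, 3.5, 4.5]

print(round(general_cri(hypothetical_delta_es), 1))
```

Note that nothing in this formula stops the score dropping below zero if the colour differences are large enough, which is how a sodium streetlight can end up with a negative rating.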

Here are a few CRI ratings gleaned from around the web:

| Source | CRI |
| --- | --- |
| Sodium streetlight | -44 |
| Standard fluorescent | 50-75 |
| Standard LED | 83 |
| LitePanels 1×1 LED | 90 |
| Arri HMI | 90+ |
| Kino Flo | 95 |
| Tungsten | 100 (maximum) |

Problems with C.R.I.

There have been many criticisms of the CRI system. One is that, because the rating is a simple mean, a lamp with mediocre performance across all the patches can score the same CRI as a lamp that renders one colour terribly but all the others well.
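
To see how averaging hides a weak spot, here is a small sketch in Python. The per-patch scores for both lamps are invented for the example (they are not measurements of real fixtures), and the names `even_lamp` and `lopsided_lamp` are just labels I've made up.

```python
from statistics import mean

# Hypothetical per-patch scores for two imaginary lamps (invented numbers).
even_lamp = [80, 80, 80, 80, 80, 80, 80, 80]      # mediocre everywhere
lopsided_lamp = [95, 95, 95, 95, 95, 95, 95, -25]  # one colour badly wrong

for name, scores in [("even", even_lamp), ("lopsided", lopsided_lamp)]:
    # The mean is what gets quoted; the minimum is what you'd notice on set.
    print(name, "mean:", mean(scores), "worst patch:", min(scores))
```

Both lamps average out to the same figure, yet the second would make one colour look badly off. Quoting the worst patch alongside the mean would expose the difference, but a single CRI number does not.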

Further criticisms relate to the colour patches themselves. The eight standard patches are low in saturation, making them easier to render accurately than bright colours. An unscrupulous manufacturer could design their lamp to render the test colours well without worrying about the rest of the spectrum.

In practice this all means that CRI ratings sometimes don’t correspond to the evidence of your own eyes. For example, I’d wager that an HMI with a quoted CRI in the low nineties is going to render more natural skin-tones than an LED panel with the same rating.

I prefer to assess the quality of a light source by eye rather than relying on any quoted CRI value. Holding my hand up in front of an LED fixture, I can quickly tell whether the skin tones look right or not. Unfortunately even this system is flawed.

The fundamental issue is the trichromatic nature of our eyes and of cameras: both work out what colour things are based on sensory input of only red, green and blue. As an analogy, imagine a wall with a number of cracks in it. Imagine that you can only inspect it through an opaque barrier with three slits in it. Through those three slits, the wall may look completely unblemished. The cracks are there, but since they’re not aligned with the slits, you’re not aware of them. And the “slits” of the human eye are not in the same place as the slits of a camera’s sensor, i.e. the respective sensitivities of our long, medium and short cones do not quite match the red, green and blue dyes in the Bayer filters of cameras. Under continuous-spectrum lighting (“smooth wall”) this doesn’t matter, but with non-continuous-spectrum sources (“cracked wall”) it can lead to something looking right to the eye but not on camera, or vice-versa.

Conclusion

Given its age and its intended use, it’s not surprising that CRI is a pretty poor indicator of light quality for a modern DP or gaffer. Various alternative systems exist, including GAI (Gamut Area Index) and TLCI (Television Lighting Consistency Index), the latter similar to CRI but introducing a camera into the process rather than relying solely on human observation. The Academy of Motion Picture Arts and Sciences recently invented a system, Spectral Similarity Index (SSI), which involves measuring the source itself with a spectrometer, rather than reflected light. At the time of writing, however, we are still stuck with CRI as the dominant quantitative measure.

So what is the solution? Test, test, test. Take your chosen camera and lens system and shoot some footage with the fixtures in question. For the moment at least, that is the only way to really know what kind of light you’re getting.